Broadcom: AI Juggernaut or Priced for Perfection?

Consensus is positive... But it has cracks.

Market Sentiment Is Loud & Clear…

The market is underwriting Broadcom (AVGO) as a primary winner, structurally embedded into the foundation of AI infrastructure, with tailwinds that could last for years.

The market has a point…

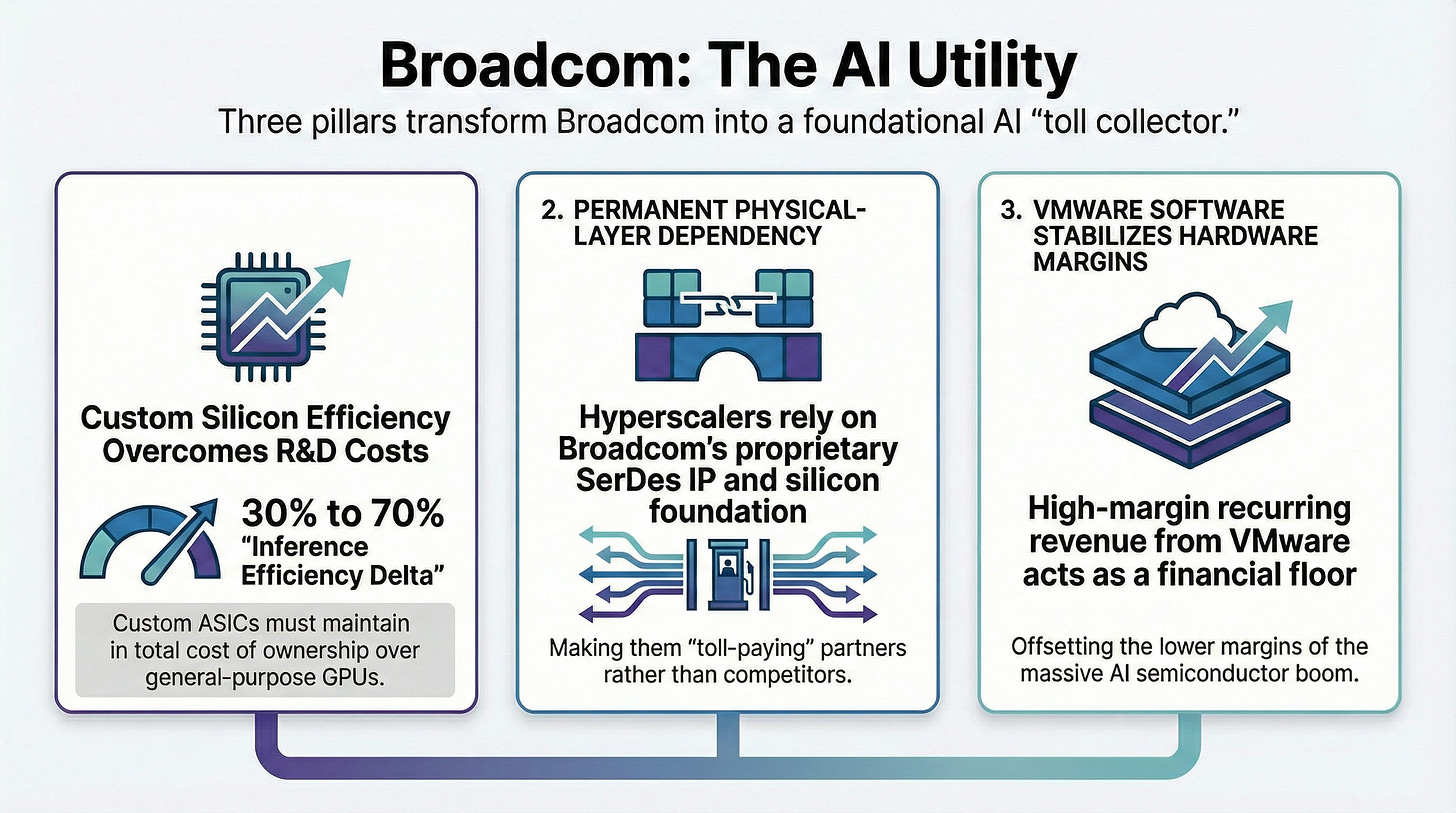

AVGO is embedded in the AI infrastructure. Hyperscalers depend on it for custom silicon, high-speed networking, and the physical layer IP that makes internal chips viable at scale. VMware adds recurring software cash flow that steadies results. In Q4 FY2025 (ended November 2, 2025), AVGO reported $18.0B in revenue (+28% YoY) and 74% YoY growth in AI semiconductor revenue.

For this consensus to hold, three specific mechanical assumptions must be true:

1. Inference demands specialization: The consensus assumes that at scale, the Total Cost of Ownership (TCO) advantage of custom chips over general-purpose GPUs is so large that it overcomes the massive R&D costs and rigidity of developing proprietary silicon.

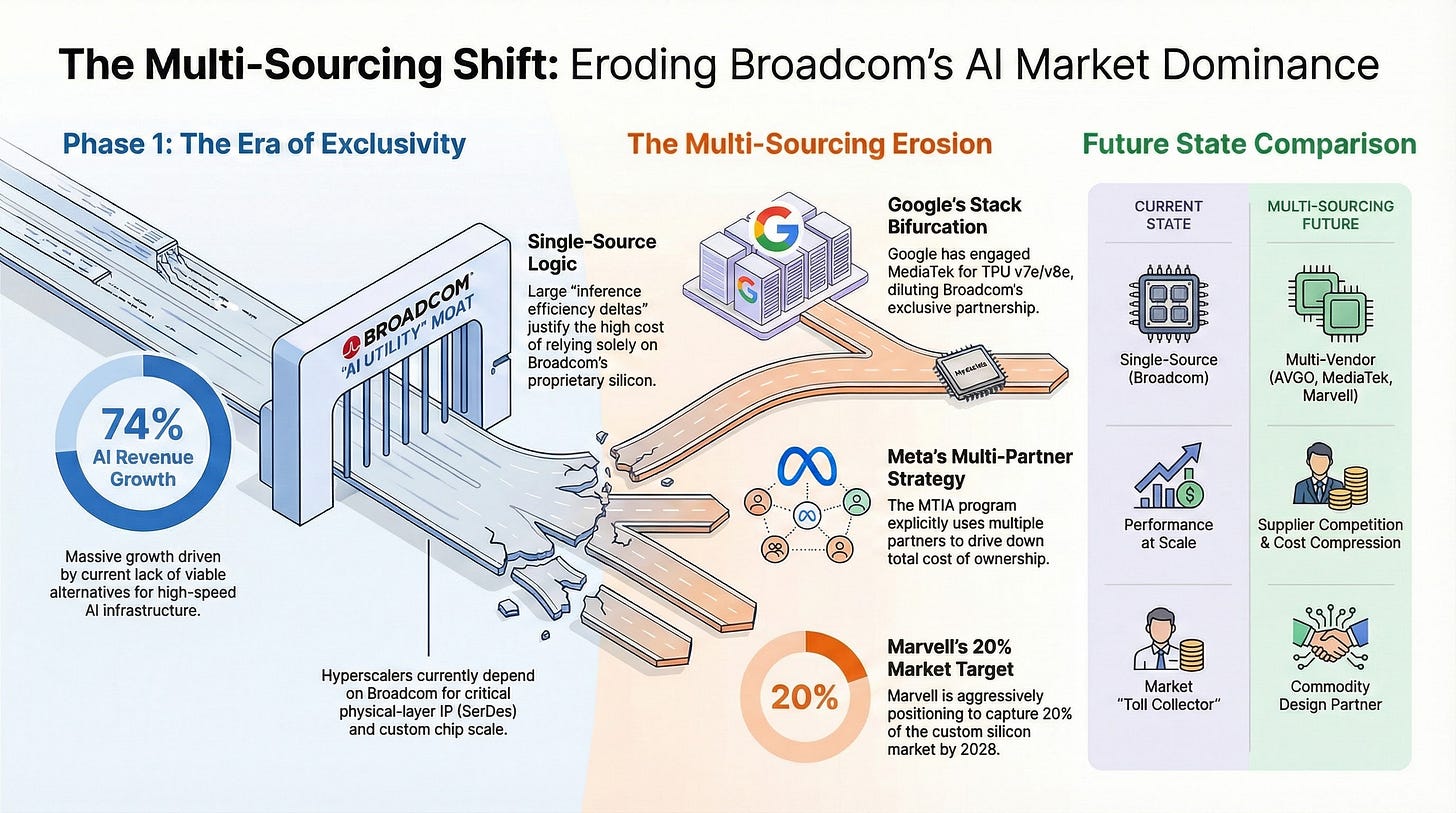

2. Hyperscalers are permanently dependent: It assumes that companies like Google and Meta cannot fully “verticalize” the physical layer of chip design. They must continue paying AVGO for its SerDes IP rather than developing it in-house or moving to lower-cost commodity design houses like Marvell (MRVL), which is aggressively targeting 20% of the custom silicon market by 2028.

3. Volume outweighs dilution: It assumes that the sheer scale of AI revenue will compensate for its lower quality. Custom silicon carries a much lower margin than VMware software; the consensus is that the software engine will hold firm and keep the lower-margin AI boom from dragging down the company’s overall blended profitability.
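The first assumption is, at heart, a break-even calculation: the up-front cost of a custom chip program has to be recovered through per-unit savings across the deployed fleet. A minimal sketch of that arithmetic follows. The function name and every dollar figure are hypothetical placeholders chosen for illustration, not disclosed program costs.

```python
import math


def break_even_units(nre_cost_usd, gpu_tco_per_unit_usd, asic_tco_per_unit_usd):
    """Units that must be deployed before per-unit TCO savings repay the
    one-time design (NRE) cost. Returns None if the ASIC saves nothing."""
    saving_per_unit = gpu_tco_per_unit_usd - asic_tco_per_unit_usd
    if saving_per_unit <= 0:
        return None
    return math.ceil(nre_cost_usd / saving_per_unit)


# Hypothetical inputs: a $1B design program and illustrative per-unit TCO.
wide_gap = break_even_units(1_000_000_000, 40_000, 25_000)
narrow_gap = break_even_units(1_000_000_000, 40_000, 35_000)

print(f"Break-even with a $15k saving per unit: {wide_gap:,} units")
print(f"Break-even with a $5k saving per unit:  {narrow_gap:,} units")
```

The point of the sketch is sensitivity rather than the specific numbers: when the per-unit saving shrinks to a third, the fleet needed to justify the program triples.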

If the consensus holds, AVGO shifts from chip company to AI utility. Revenue becomes less exposed to semiconductor cyclicality, VMware’s cash helps build a fortress balance sheet, and custom silicon scales with global compute demand independent of which hyperscaler wins in the end.

The stock decouples from semiconductor cycles and starts trading like a software compounder. AVGO isn’t picking the AI race winners; it’s collecting tolls from everyone who runs.

This Narrative Has Cracks…

What happens if the inference efficiency spread narrows or the revenue mix turns toxic?

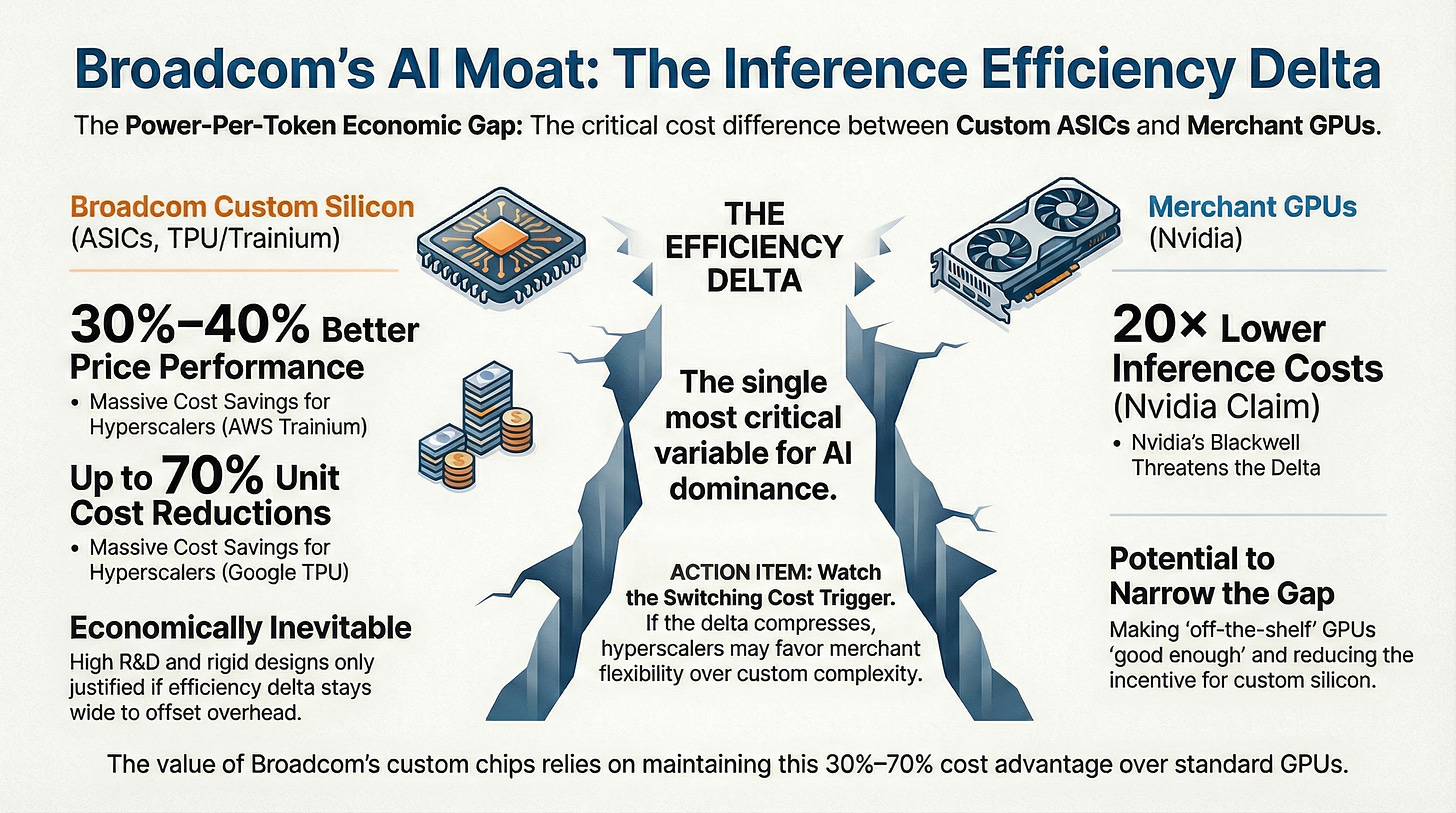

Let’s start with NVIDIA... If its next-generation GPUs offer inference performance deemed “good enough,” the economic justification for custom silicon evaporates. NVIDIA’s Blackwell architecture is marketed to deliver 20× lower inference cost and 25× higher token throughput versus Hopper. Those improvements narrow the performance gap that ASIC designers count on. Would a hyperscaler spend years co-designing ASICs with AVGO when off-the-shelf GPUs can deliver comparable cost-per-token? The answer depends on whether custom ASICs retain a durable inference efficiency delta versus the leading merchant GPU.

The Key Variable: “Inference Efficiency Delta”

Inference efficiency delta is the gap in cost per token (power included) between AVGO’s custom ASICs and the best available merchant GPU, measured in production conditions rather than lab benchmarks.

If the delta stays wide, like the 30% to 40% price performance advantage claimed for AWS Trainium 2 or the 70% per-unit cost reduction cited for Google TPU v5 and Trainium 3, custom programs remain economically inevitable. Hyperscalers can justify multi‑year design cycles, specialized software stacks, and the organizational overhead of bespoke hardware because the savings persist at scale.

If the delta compresses, procurement behavior changes. Even if custom silicon remains technically superior, “good enough” economics plus merchant flexibility can win. The switching costs are not only hardware. They are software, developer tooling, and the ability to redeploy capacity across shifting workloads.
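To make the delta concrete, here is a rough sketch of how it could be computed from two inputs per accelerator: an all-in hourly cost (hardware amortization plus power) and sustained token throughput. The function names and all input values are assumptions made up for illustration; they are not measured figures for any Broadcom, Google, AWS, or NVIDIA part.

```python
def cost_per_million_tokens(hourly_cost_usd, tokens_per_second):
    """All-in hourly cost of one accelerator divided by its hourly token output."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def efficiency_delta(asic_cost, gpu_cost):
    """Fractional cost-per-token saving of the ASIC (positive = ASIC cheaper)."""
    return 1 - asic_cost / gpu_cost


# Hypothetical inputs, chosen only to show the mechanics.
asic = cost_per_million_tokens(hourly_cost_usd=2.10, tokens_per_second=8_000)
gpu_today = cost_per_million_tokens(hourly_cost_usd=4.00, tokens_per_second=10_000)
# Same GPU price, 40% more throughput in the next generation (assumed).
gpu_next = cost_per_million_tokens(hourly_cost_usd=4.00, tokens_per_second=14_000)

delta_today = efficiency_delta(asic, gpu_today)  # roughly 34%
delta_next = efficiency_delta(asic, gpu_next)    # roughly 8%

print(f"Delta vs. current GPU: {delta_today:.1%}")
print(f"Delta vs. next-gen GPU: {delta_next:.1%}")
```

Under these made-up inputs, a 40% throughput gain on the merchant side is enough to take the delta from the 30% to 40% range down to single digits without the ASIC changing at all.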

This is why the debate ultimately comes down to engineering and economics, not sentiment. The stock is underwriting that the delta will remain wide enough for AVGO’s custom silicon and networking programs to compound across multiple generations.

Then there’s the dependency question. Assumption #2 requires that hyperscalers do not materially compress AVGO’s physical layer take rate over time. That assumption is already under pressure. Google has reportedly engaged MediaTek to support specific “efficiency” workloads (TPU v7e and v8e), effectively bifurcating the stack and diluting AVGO’s exclusivity. Meanwhile, Meta’s MTIA program spans multiple partners and is explicitly designed to deliver roughly 44% lower total cost of ownership than NVIDIA-based alternatives. The strategic direction is clear: hyperscalers are incentivized to multi-source, internalize more of the stack, and use vendor competition to compress supplier economics across successive generations.

The Margin Blend Risk: If the AI hardware ramp outpaces software stabilization, Broadcom risks a form of “hollow growth,” in which revenue expands even as margins deteriorate. This risk is already observable. Management has guided consolidated gross margins down by roughly 100 basis points for Q1 FY2026, citing a mix shift toward lower-margin AI revenue. If that trend accelerates, especially in an illustrative scenario where AI hardware accounts for 50%+ of revenue, the blended margin profile can drift lower even as the top line looks strong.
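The margin-blend math is a revenue-weighted average, which a few lines can illustrate. In the sketch below, the segment gross margins (60% for AI hardware, 85% for everything else) and the starting 35% AI share are hypothetical placeholders, since Broadcom does not report margins at this granularity; only the 50% AI share comes from the illustrative scenario above.

```python
def blended_margin(ai_share, ai_margin, other_margin):
    """Revenue-weighted gross margin for a two-segment mix."""
    if not 0 <= ai_share <= 1:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * ai_margin + (1 - ai_share) * other_margin


# Hypothetical segment margins; not company-reported figures.
AI_MARGIN = 0.60
OTHER_MARGIN = 0.85

today = blended_margin(0.35, AI_MARGIN, OTHER_MARGIN)     # 76.25%
scenario = blended_margin(0.50, AI_MARGIN, OTHER_MARGIN)  # 72.50%
drift_bps = (today - scenario) * 10_000                   # 375 bps

print(f"Blended margin at 35% AI mix: {today:.2%}")
print(f"Blended margin at 50% AI mix: {scenario:.2%}")
print(f"Drift: {drift_bps:.0f} bps")
```

With these assumed inputs, the mix shift alone moves the blended margin by several hundred basis points even though neither segment's own margin changes.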

The VMware Floor Risk: The market’s willingness to value AVGO as a software-anchored compounder depends on VMware’s continued ability to serve as a margin and cash flow floor. If renewal economics weaken, customer churn increases, or regulatory constraints limit monetization, the stabilizing engine becomes less reliable at the same time that the hardware mix is rising. A weaker floor combined with a heavier hardware mix is what drives sharp multiple compression. The friction is already evident in the recent AT&T lawsuit alleging unfair bundling practices and in EU antitrust scrutiny of licensing changes (such as the 72-core minimum).

If the margin floor or efficiency delta weakens, the re-rating risk becomes the dominant variable.

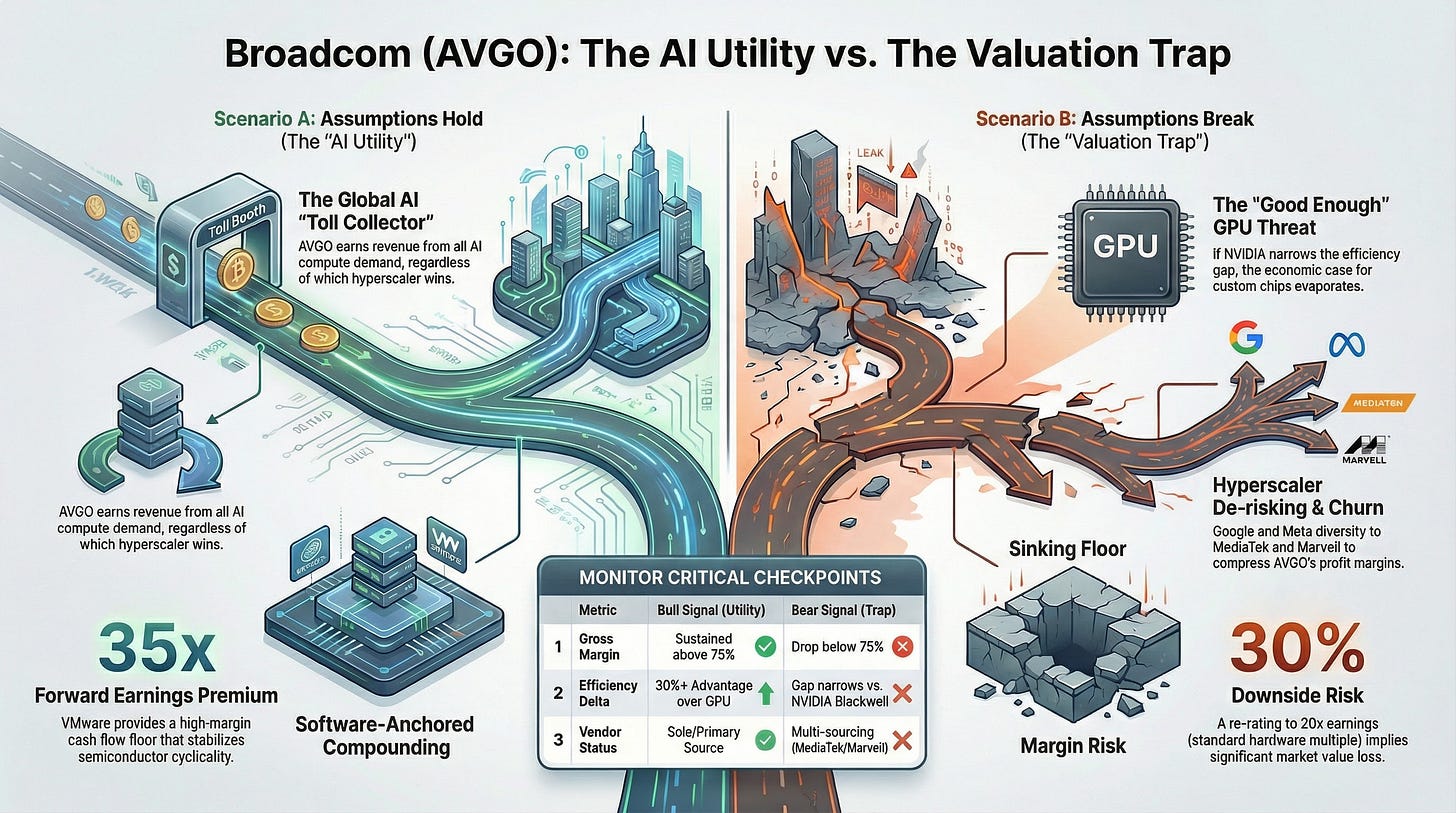

Valuation Reality Check…

At current prices, AVGO trades at roughly 35x forward non-GAAP earnings and around 49x EV/EBITDA (TTM). These metrics reflect how the market is valuing the company’s ongoing earnings power rather than acquisition accounting noise. That’s elevated for a business with substantial semiconductor exposure, but it makes more sense if you buy the software compounder narrative. The implied assumption is that AVGO deserves to trade like Oracle rather than Marvell.

For that underwriting to hold, VMware must continue providing durable margin support, AI revenue must compound at 30% or better, and the inference efficiency delta must remain intact. If all three play out, paying 35x earnings for a business growing revenue north of 20% with gross margins above 75% is defensible.

But if any of them slips, the valuation leaves little room for error. Margin compression or a slowdown in AI growth would likely trigger meaningful multiple contraction. Even a re-rating to 20x earnings, which would still be generous for a hardware-heavy business, implies 30% or more downside from current levels: 20/35 is about 0.57, so roughly 43% downside with forward earnings held flat, and still about 30% if earnings grow a little over 20% in the meantime.
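The re-rating arithmetic can be checked directly. This is a simple sketch that treats price as multiple times earnings; the function name is illustrative, and the 22.5% earnings-growth input is an assumption picked to show what it takes to land at the 30% figure.

```python
def rerating_return(current_multiple, new_multiple, earnings_growth=0.0):
    """Price change when the earnings multiple moves from current to new
    while earnings grow by the given fraction over the same period."""
    return (new_multiple / current_multiple) * (1 + earnings_growth) - 1


flat_earnings = rerating_return(35, 20)            # about -43%
with_growth = rerating_return(35, 20, 0.225)       # -30%

print(f"35x -> 20x, flat earnings:   {flat_earnings:.1%}")
print(f"35x -> 20x, +22.5% earnings: {with_growth:.1%}")
```

Read this way, 30% is the downside after earnings growth has already absorbed part of the multiple compression; with no growth the drawdown is closer to 43%.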

Final Thoughts…

AVGO is a well-run company with real advantages. Hyperscaler relationships are embedded, the physical layer moat is meaningful, and VMware adds a powerful software engine when renewal economics hold.

The issue is not whether the consensus has evidence. It does.

The issue is that the current valuation assumes that evidence remains structural, with limited tolerance for slippage in margins, VMware retention, or competitive dynamics.

In other words, the market is already paying for the AI utility narrative to play out cleanly.

That leaves the thesis governed by a small set of observable checkpoints:

Gross margin trajectory: A sustained move below 75% would signal the margin-blend math is shifting.

VMware renewal rates: Any meaningful churn pressure from regulatory probes or pricing backlash weakens the software floor.

NVIDIA’s inference benchmarks: A narrowing efficiency gap reduces the economic case for custom silicon.

Hyperscaler commentary: Signals of vendor diversification (specifically regarding MediaTek/Google or Marvell), second sourcing, or physical-layer internalization matter more than headlines.